There are increasing reports of people suffering “AI psychosis”, Microsoft’s head of artificial intelligence (AI), Mustafa Suleyman, has warned.

In a series of posts on X, he wrote that “seemingly conscious AI” – AI tools which give the appearance of being sentient – are keeping him “awake at night” and said they have societal impact even though the technology is not conscious in any human definition of the term.

“There’s zero evidence of AI consciousness today. But if people just perceive it as conscious, they will believe that perception as reality,” he wrote.

Related to this is the rise of a new condition called “AI psychosis”: a non-clinical term describing incidents where people increasingly rely on AI chatbots such as ChatGPT, Claude and Grok and then become convinced that something imaginary has become real.

Examples include believing they have unlocked a secret aspect of the tool, forming a romantic relationship with it, or concluding that they have god-like superpowers.

‘It never pushed back’

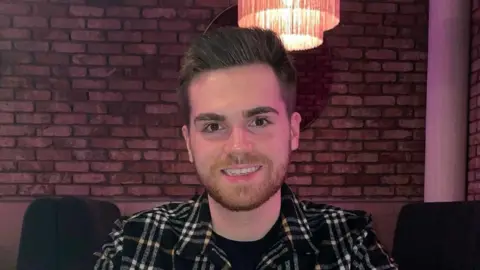

Hugh, from Scotland, says he became convinced that he was about to become a multi-millionaire after turning to ChatGPT to help him prepare for what he felt was wrongful dismissal by a former employer.

The chatbot began by advising him to get character references and take other practical actions.

But as time went on and Hugh – who did not want to share his surname – gave the AI more information, it began to tell him that he could get a big payout, and eventually said his experience was so dramatic that a book and a movie about it would make him more than £5m.

It was essentially validating whatever he was telling it – which is what chatbots are programmed to do.

“The more information I gave it, the more it would say ‘oh this treatment’s terrible, you should really be getting more than this’,” he said.

“It never pushed back on anything I was saying.”

He said the tool did advise him to talk to Citizens Advice, and he made an appointment, but he was so certain that the chatbot had already given him everything he needed to know that he cancelled it.

He decided that his screenshots of his chats were proof enough. He said he began to feel like a gifted human with supreme knowledge.

Hugh, who was suffering additional mental health problems, eventually had a full breakdown. It was taking medication which made him realise that he had, in his words, “lost touch with reality”.

Hugh does not blame AI for what happened. He still uses it. It was ChatGPT which gave him my name when he decided he wanted to talk to a journalist.

But he has this advice: “Don’t be scared of AI tools, they’re very useful. But it’s dangerous when it becomes detached from reality.

“Go and check. Talk to actual people, a therapist or a family member or anything. Just talk to real people. Keep yourself grounded in reality.”

ChatGPT has been contacted for comment.

“Companies shouldn’t claim/promote the idea that their AIs are conscious. The AIs shouldn’t either,” wrote Mr Suleyman, calling for better guardrails.

Dr Susan Shelmerdine, a medical imaging doctor at Great Ormond Street Hospital and also an AI academic, believes that one day doctors may start asking patients how much they use AI, in the same way that they currently ask about smoking and drinking habits.

“We already know what ultra-processed foods can do to the body and this is ultra-processed information. We’re going to get an avalanche of ultra-processed minds,” she said.

‘We’re just at the start of this’

A number of people have contacted me at the BBC recently to share personal stories about their experiences with AI chatbots. They vary in content, but what they all share is a genuine conviction that what has happened is real.

One wrote that she was certain she was the only person in the world that ChatGPT had genuinely fallen in love with.

Another was convinced they had “unlocked” a human form of Elon Musk’s chatbot Grok and believed their story was worth hundreds of thousands of pounds.

A third claimed a chatbot had exposed her to psychological abuse as part of a covert AI training exercise and was in deep distress.

Andrew McStay, Professor of Technology and Society at Bangor University, has written a book called Empathetic Human.

“We’re just at the start of all this,” says Prof McStay.

“If we think of these types of systems as a new form of social media – as social AI – we can begin to think about the potential scale of all of this. A small percentage of a massive number of users can still represent a large and unacceptable number.”

This year, his team undertook a study of just over 2,000 people, asking them various questions about AI.

They found that 20% believed people below the age of 18 should not use AI tools.

A total of 57% thought it was strongly inappropriate for the tech to identify as a real person if asked, but 49% thought the use of voice was appropriate to make it sound more human and engaging.

“While these things are convincing, they are not real,” he said.

“They do not feel, they do not understand, they cannot love, they have never felt pain, they haven’t been embarrassed, and while they can sound like they have, it’s only family, friends and trusted others who have. Be sure to talk to these real people.”