Two new experiments show that most people do not even consider that a personal message could be AI-generated, even when they themselves use artificial intelligence to write.

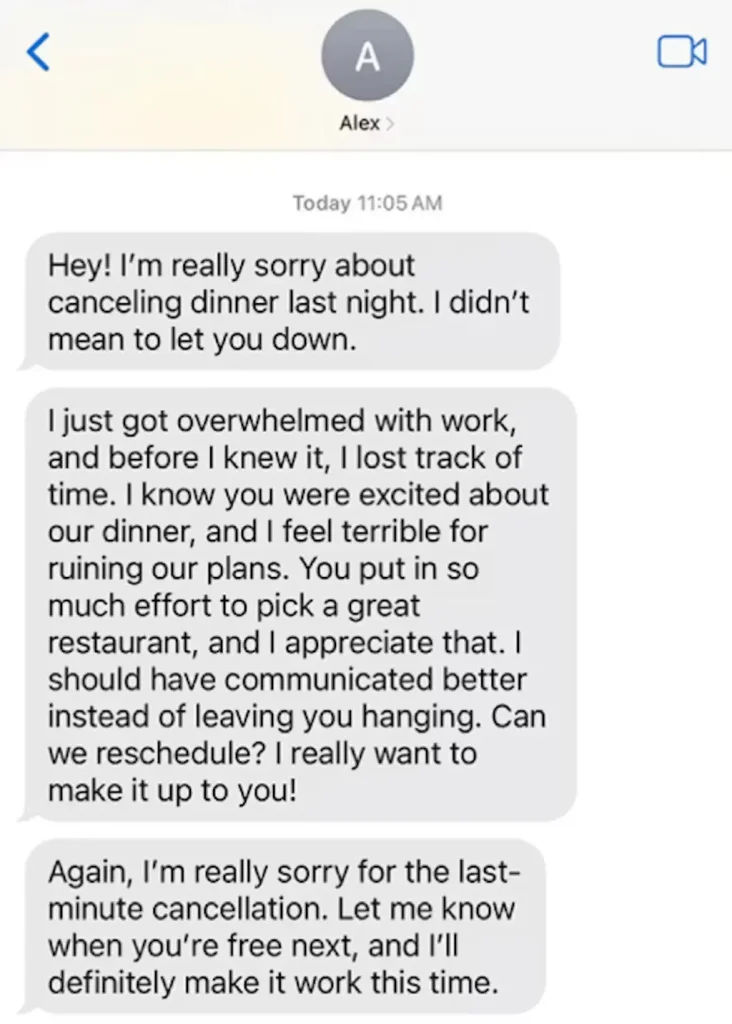

To see how people judge someone based on their writing in the age of ChatGPT, my colleague Jiaqi Zhu and I recruited more than 1,300 U.S.-based participants, ages 18 to 84, and showed them AI-generated messages, such as an apology sent by email. We split our volunteers into four groups: Some people saw the messages with no information about who or what wrote them, as in everyday life. Others were told the messages were definitely written by a human, definitely AI-generated, or that the source could be either.

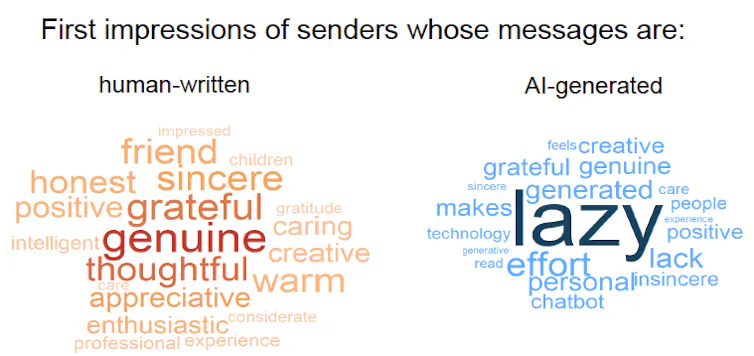

We found a clear “AI disclosure penalty.” When people knew a message was AI-generated, they rated the sender much more negatively (“lazy,” “insincere,” “lack of effort”) than when they believed that the same text was written by a person (“genuine,” “grateful,” “thoughtful”).

But here’s the twist: The participants who were not told anything about authorship formed impressions that were just as positive as those from people who were told the messages were genuinely human.

This complete lack of skepticism surprised us—and it raises new questions. Maybe participants were not familiar enough with AI to realize that today’s models can produce detailed and personal messages. (They can.) Or perhaps participants have never used AI themselves. (They likely have.) So we also tested whether participants’ own AI use changed how they judged senders.

To our even bigger surprise, we found little to no effect. People who use generative AI quite frequently in their daily lives—at least every other day—did penalize AI use slightly less when AI authorship was disclosed, compared with people who never or rarely use AI. But participants were no more skeptical by default: When authorship was not disclosed, heavy AI users, light AI users, and nonusers all tended to assume the text was written by a person and formed essentially the same impressions.

Why it matters

This lack of skepticism, and the lack of negative impressions that comes with it, matters because people make social judgments from text all the time. Recipients treat the time and effort that go into a written message as a sign of the writer’s sincerity, authenticity, or competence, and those impressions shape people’s decisions in friendships, dating, and work.

Yet our main findings reveal a striking disconnect: People usually don’t suspect AI use unless it is obvious. This unawareness creates a moral dilemma: People who use AI in secret can enjoy the benefits while facing almost no risk of detection. Meanwhile, paradoxically, people who are up front and admit to using AI suffer a reputational hit.

Over time, a lack of skepticism and awareness could reshape what writing means in everyday life. Readers might learn to treat writing as a less reliable signal of someone’s character or effort, and instead rely on other forms of communication. For example, widespread AI use has already prompted employers to discount the value of cover letters from job applicants. Instead, they’re relying more on personal recommendations from an applicant’s current supervisor or connections made through in-person networking.

What other research is being done

Other researchers have documented a wide range of negative impressions about people who disclose their AI use. Studies show it makes job applicants seem less desirable and employees seem less competent. Readers of creative writing perceive AI users as less creative and inauthentic. People see personal apologies and corporate apologies that stem from AI as less effective. In general, disclosing AI use decreases trust and undermines legitimacy.

Yet without disclosure, there is clear evidence that most people cannot reliably detect AI-generated text, even with the help of detection tools, especially when the text is a mix of human-written and AI-generated content. Even when people feel confident about their ability to spot AI text, their confidence may be nothing more than a self-affirming illusion.

What’s next

Even though our experiments did not reveal suspicion of AI use, that doesn’t mean people never suspect it in the real world. In some settings, people may already be hypervigilant about AI. Academia is an obvious example. In our next studies, we want to understand when and why people naturally start to suspect AI use, and what flips the switch between trust and doubt.

Until then, if you want your personal message to be judged as heartfelt, the safest strategy may be to make a phone call, leave a voicemail, or, better yet, say it in person.

The Research Brief is a short take on interesting academic work.

Andras Molnar is an assistant professor of psychology at the University of Michigan.

This article is republished from The Conversation under a Creative Commons license.